The advance from telegrams to fiber optics has been a story of ever-faster information transport. All sorts of wonders have followed – news propagates at lightning speed, information flows unhindered, and markets are increasingly efficient and correct. It takes mere seconds, or less, before a piece of news is priced into a stock by high-frequency traders. The advantage that each unique data point brings is competed away. And the propagation of information allows the entire system to reach swifter consensus on the correct price of a stock, thus making the system more capable of seeking truth.

However, this is not an unalloyed good for all. Traders in markets must accurately weigh their confidence in a particular theory and update it by precisely as much as new information warrants. Those who are incorrect are outcompeted by those who are correct. This leads to the miraculous and efficient “price discovery” mechanism of markets, but that discipline is notably absent in other, less efficient human systems.

Take a simple example: regular old groups of people. Because people aren’t destroyed by holding incorrect opinions, the truth-seeking ability of humans isn’t frequently tested. This allows our charming human biases to prosper. One of our primary biases is the all-too-human fear of uncertainty.

Humans prefer to form judgements fast, and those judgements are almost always a firm, confident decision that one side is right and the other is wrong. This is reflected in that age-old phrase: you are asked to “suspend judgement”, and not “abstain from judgement”. There is something inherently temporary about our ability to stay uncertain. We can hold off for a while, but in the end, people think in binaries, not in probabilities.

There are lots of reasons why humans hate uncertainty. Uncertainty gives you no stable ground to build on – if you don’t know what will happen, all possibilities are in play and potential options multiply. In contrast, thinking through just one possibility is faster, more calorically efficient, and most importantly, just easier.

The combination of fast information flows and our failure to correctly regard uncertainty results in a characteristic symptom: overconfident dogmas that change quickly. We are in total consensus, yet what the consensus is changes at dizzying speeds.

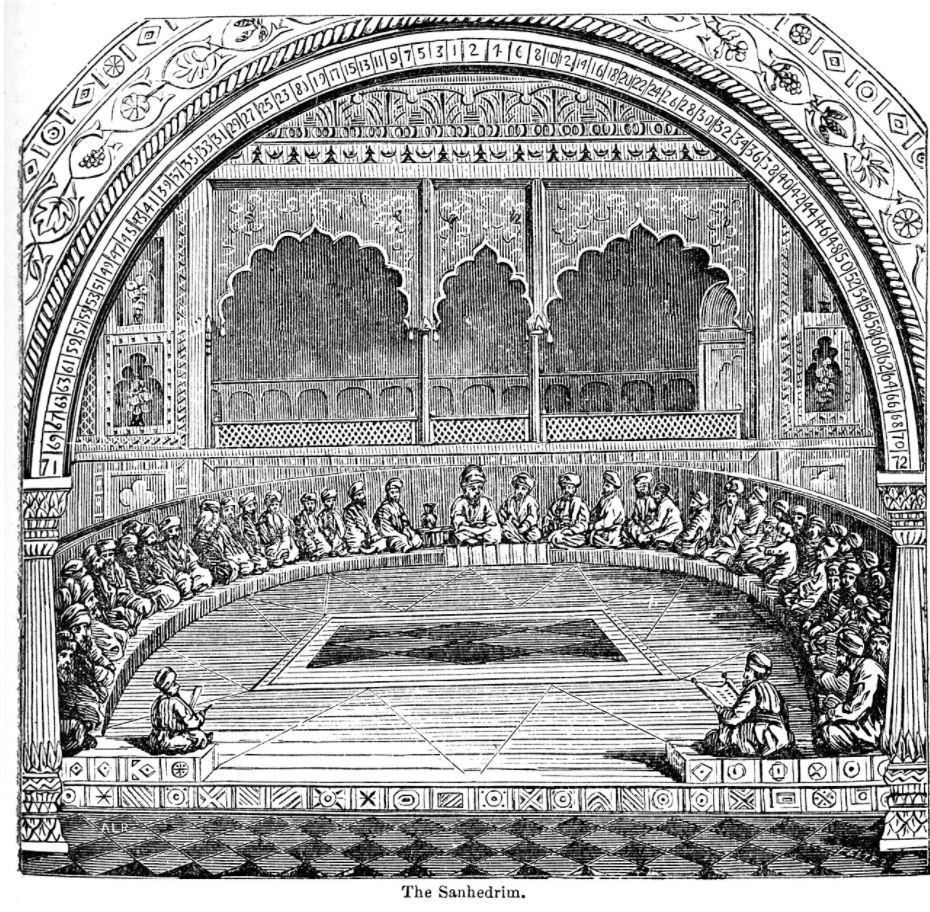

How did societies in the past deal with consensus? Consider the ancient Jewish court, the Sanhedrin, where assemblies of elders heard cases and made judgements. The court was, by many accounts, the last word on important matters like judgements of the law.

Most notably for us, the Talmud, a source of Jewish law, has a perplexing rule about consensus. It says that when the court reaches a unanimous verdict of guilt, that verdict must be thrown out. Precisely when the case looks most convincing, the defendant has to be set free. The rule is an apparent contradiction on its face, but scholars reason that it is precisely the unanimity of the judges that indicates a fatal flaw in the process.

A Sanhedrin judge must show himself to be an able thinker before being allowed onto the bench of the court, competent enough to argue for even an impossible claim, such as one that contradicts the explicit text of the Bible. The good judge is therefore one who can argue for both sides, even when the evidence for one side seems overwhelming and totally air-tight. A unanimous verdict, then, is a haunting sign that the judges have failed at this virtue, and have lost their ability to seek truth.

The change from high confidence to total confidence in an opinion is not a change of degree, but of kind. Opinions held by a majority are likely to be true, while a total consensus hints that the entire system of truth-seeking is broken.

The Talmudic rule could be read as a warning about our social climate. The fervent and dogmatic consensus of our society indicates that we have lost the ability to make sound judgements. Becoming a judge on the Sanhedrin entailed passing a high bar: proving your capability to resist groupthink.

We shouldn’t expect common citizens to pass such a bar. It seems particularly useless to exhort people to keep an open mind. The little we do know about human psychology tells us that biases are difficult to fix, and that when people form small groups, each group will naturally tend toward consensus among its members. Healthy systems instead seek truth by allowing these groups of fervent consensus to balance one another, so that society as a whole arrives at an approximation of the truth.

Our system broke when the various groups of differing opinion began to merge into one homogeneous mass of consensus. In an environment with fast information flows, ideas can be critiqued all of the time, and groups that sincerely hold any differing belief are torn apart. The human bias for groupthink and conformism is supercharged.

Maybe there is something healthier about a world where sharing an idea means waiting days for a letter to be delivered. In the delay, you develop a sense for the true weight of information and the importance of deliberating on new evidence. In contrast, an abundance of communication, especially between small groups, allows not just for the transfer of information but also for a new mechanism of coordination. Consistent overexposure to new ideas and information means all groups become alike. Group-level consensus, a manageable evil, transforms into society-level consensus, a new kind of beast.

The delays of information transfer in the past weren’t just useless inefficiency: they were the slack in the system that allowed a plurality of opinions to thrive. When groups of people tend towards consensus, the entire system can support truth only by forming subgroups of opposing opinions.

Our blind spot for uncertainty means that a healthy system must support a plurality of opinions, and that information should flow between groups slowly. Where more information used to make people smarter, now it just makes us dumber.